DeepSeek Janus Pro is interesting for a different reason than DeepSeek V3 or R1.

V3 and R1 became well known for large-scale language reasoning and the deployment discussions around them. Janus Pro matters because it tries to solve a different kind of product problem:

How do you build one model family that can handle both multimodal understanding and image generation without treating those as completely separate systems?

The best place to start is the official Janus repository.

What the Official Repo Says

The Janus repository describes the Janus series as:

- unified multimodal understanding and generation models

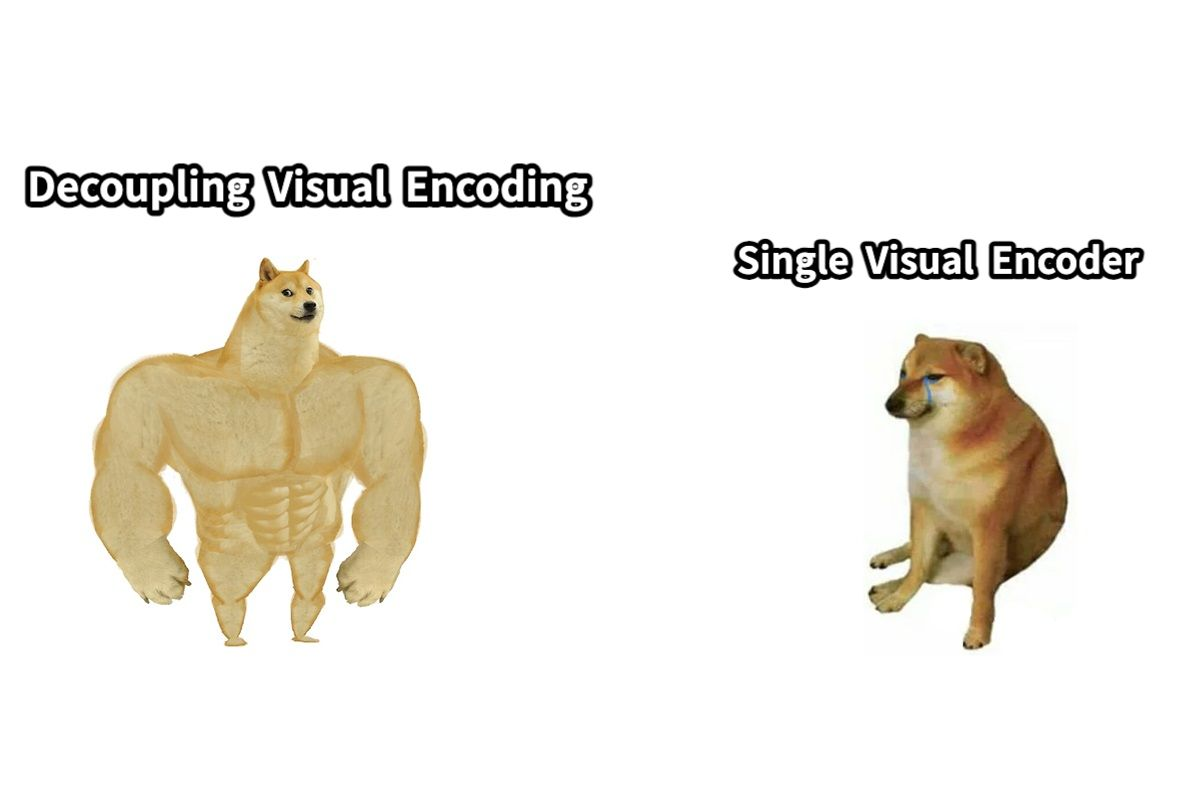

- built around a decoupled visual encoding design

- intended to separate visual understanding and visual generation pathways while keeping the overall architecture unified

That last part is the most important. The repository is not marketing Janus Pro as “just another image model.” It is presenting a system-level attempt to handle both directions of multimodal work in one family:

- image understanding

- text-conditioned image generation

Source:

- Janus official repository: https://github.com/deepseek-ai/Janus

Janus Pro at a Glance

| Question | Janus Pro framing |
|---|---|
| Core theme | Unified multimodal understanding and generation |
| Architectural angle | Decoupled visual encoding |
| Main value | One family that handles seeing and generating |
| Best evaluation mode | Bidirectional multimodal workflows |

Why the Architecture Matters

Most multimodal product discussion gets flattened into one question:

“How good are the outputs?”

But with Janus Pro, the more useful engineering question is:

“How is the model organized so that understanding and generation do not interfere with each other?”

The official repo's answer is the decoupled visual pathway idea.

In practical terms, that means DeepSeek is trying to avoid a common tension in multimodal systems:

- one part of the stack wants rich visual semantics for understanding

- another wants generation-friendly representations for image synthesis

Janus Pro treats those as related but not identical problems.
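To make the tension concrete, here is a minimal toy sketch of the decoupled idea: two separate visual pathways feeding one shared model. Everything in it is an illustrative assumption, not code from the Janus repository. The function names, the `SharedBackbone` class, and the choice of continuous features for understanding versus discrete codes for generation are hypothetical stand-ins chosen to show the shape of the design.

```python
from typing import List

Image = List[List[float]]  # toy grayscale image: rows of pixel values in [0, 1]


def encode_for_understanding(image: Image) -> List[float]:
    """Understanding pathway (toy): continuous features, here one mean per row."""
    return [sum(row) / len(row) for row in image]


def encode_for_generation(image: Image, levels: int = 4) -> List[int]:
    """Generation pathway (toy): discrete codes, each pixel quantized into bins."""
    return [min(int(p * levels), levels - 1) for row in image for p in row]


def decode_generated(codes: List[int], width: int, levels: int = 4) -> Image:
    """Map discrete codes back to pixels (bin centers) and reshape into rows."""
    pixels = [(c + 0.5) / levels for c in codes]
    return [pixels[i:i + width] for i in range(0, len(pixels), width)]


class SharedBackbone:
    """Hypothetical stand-in for the single model both pathways feed into."""

    def answer(self, features: List[float], question: str) -> str:
        # Uses the understanding features only.
        brightest = max(range(len(features)), key=features.__getitem__)
        return f"{question} -> brightest row is {brightest}"

    def predict_codes(self, prompt: str, n_codes: int, levels: int = 4) -> List[int]:
        # Deterministic placeholder for image-token prediction from a text prompt.
        return [(ord(prompt[i % len(prompt)]) + i) % levels for i in range(n_codes)]


image = [[0.1, 0.2], [0.9, 0.8]]
backbone = SharedBackbone()

features = encode_for_understanding(image)  # representation tuned for "seeing"
codes = encode_for_generation(image)        # representation tuned for "generating"
reply = backbone.answer(features, "Which row is brightest?")
generated = decode_generated(backbone.predict_codes("a cat", n_codes=4), width=2)
```

The point of the sketch is structural: the same image yields two different representations, and neither pathway has to compromise to serve the other, while a single backbone still sits behind both.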

That makes the model interesting not only as a user-facing system, but also as an architectural reference for multimodal model design.

What Janus Pro Is Good For

If you are evaluating Janus Pro seriously, the strongest use cases are the ones that combine understanding and generation in a single workflow, not isolated “make an image” demos.

Examples:

- image-grounded instruction following

- multimodal agents that need to inspect visual input before responding

- systems that move between recognition and generation

- applied workflows where a model must understand a visual scene and then produce derived content

In other words, Janus Pro becomes more interesting as soon as the workflow is bidirectional.
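A bidirectional workflow of this kind can be sketched as a small pipeline that inspects an image first and then generates from what it saw. The `MultimodalModel` interface, its `describe` and `generate_image` methods, and the `StubModel` class below are hypothetical names invented for illustration; they are not the Janus repository's API, and a real integration would replace the stub with actual model calls.

```python
from dataclasses import dataclass
from typing import Protocol


class MultimodalModel(Protocol):
    """Hypothetical interface; not the Janus repository's API."""

    def describe(self, image_path: str, question: str) -> str: ...

    def generate_image(self, prompt: str) -> bytes: ...


@dataclass
class WorkflowResult:
    description: str
    derived_prompt: str
    image_bytes: bytes


def understand_then_generate(
    model: MultimodalModel, image_path: str, instruction: str
) -> WorkflowResult:
    """Inspect a visual input first, then produce derived content from it."""
    description = model.describe(image_path, "Describe the scene in one sentence.")
    derived_prompt = f"{instruction} Based on: {description}"
    image_bytes = model.generate_image(derived_prompt)
    return WorkflowResult(description, derived_prompt, image_bytes)


class StubModel:
    """Placeholder so the sketch runs without any model weights."""

    def describe(self, image_path: str, question: str) -> str:
        return f"a placeholder description of {image_path}"

    def generate_image(self, prompt: str) -> bytes:
        return prompt.encode("utf-8")  # stands in for encoded image data


result = understand_then_generate(StubModel(), "kitchen.jpg", "Draw a floor plan.")
print(result.derived_prompt)
```

Keeping the understanding output as an explicit intermediate value makes it possible to inspect where a bidirectional workflow fails: in what the model saw, or in what it generated from that.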

What It Is Not

A lot of weak coverage turns every multimodal release into one of two oversimplifications:

- “it beats everything”

- “it is an image generator”

The official repo supports neither of those simplistic readings.

A more defensible interpretation is:

Janus Pro is a multimodal architecture worth studying because it tries to unify two hard tasks under one model family without pretending they are the same computational problem.

How to Evaluate Janus Pro Well

If you want to compare Janus Pro against other multimodal systems, do not reduce the evaluation to a single visual sample.

A better checklist is:

- Understanding quality: Can it reliably interpret visual inputs in realistic prompts?

- Generation quality: Are the images acceptable for the kind of tasks you actually care about?

- Instruction following: Does it obey multimodal prompts consistently?

- Transition quality: How well does it move from visual understanding to generative response?

- Operational path: Can you actually run or integrate it in a way that fits your infrastructure?

Practical Evaluation Matrix

| Area | What to inspect |
|---|---|
| Understanding | Does it read images reliably in realistic prompts? |
| Generation | Are outputs usable for your actual task, not just demos? |
| Instruction following | Does it respect multimodal constraints consistently? |
| Transition quality | Can it move naturally from understanding to generation? |
| Deployment realism | Can you actually run it inside your workflow? |

That last point matters because a strong research repo is not automatically a smooth production surface.
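One way to keep an evaluation from collapsing into a single sample is to record ratings per area across many test cases. The sketch below assumes a 0.0 to 1.0 rating scale and uses area names derived from the five evaluation areas in this section; the `Scorecard` class is a hypothetical helper, and how each rating is produced (human review, automated checks) is left open.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Dict, List

# Area names are assumptions derived from the five evaluation areas.
AREAS = [
    "understanding",
    "generation",
    "instruction_following",
    "transition_quality",
    "deployment_realism",
]


@dataclass
class Scorecard:
    """Collects per-area ratings (0.0 to 1.0) across many test cases."""

    ratings: Dict[str, List[float]] = field(
        default_factory=lambda: {area: [] for area in AREAS}
    )

    def record(self, area: str, rating: float) -> None:
        if area not in self.ratings:
            raise KeyError(f"unknown area: {area}")
        if not 0.0 <= rating <= 1.0:
            raise ValueError("rating must be in [0, 1]")
        self.ratings[area].append(rating)

    def summary(self) -> Dict[str, float]:
        """Mean rating per area; areas with no samples are omitted."""
        return {area: mean(r) for area, r in self.ratings.items() if r}

    def missing_areas(self) -> List[str]:
        """Areas that have not been evaluated yet."""
        return [area for area, r in self.ratings.items() if not r]


card = Scorecard()
card.record("understanding", 1.0)
card.record("understanding", 0.5)
card.record("generation", 0.75)
print(card.summary())
print(card.missing_areas())
```

The `missing_areas` check is the useful part in practice: it makes it obvious when a comparison has only looked at generation samples and skipped transition quality or deployment realism entirely.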

Why This Model Family Matters

Janus Pro is worth attention because it broadens the DeepSeek story beyond large language reasoning.

DeepSeek is effectively showing two parallel ideas across its model lines:

- with V3 and R1: large-scale language reasoning and efficiency

- with Janus: multimodal architecture design that tries to unify understanding and generation

That makes Janus Pro important even for readers who are not planning to deploy it immediately. It is part of the larger pattern of open model builders pushing on model architecture, not only benchmark scores.

Bottom Line

The most useful way to think about Janus Pro is not:

“Is this the best image model?”

The better question is:

“Is this a serious multimodal architecture worth evaluating for workflows that combine seeing and generating?”

On that question, the answer is clearly yes.

Janus Pro matters because it gives you an open, inspectable example of how one model family can be designed to handle both multimodal understanding and visual generation without collapsing them into the same internal path.

Source

- Janus official repository: https://github.com/deepseek-ai/Janus